With support from the DARPA ITO Software for Distributed Robotics (SDR) and Mobile Autonomous Robot Software (MARS) projects, a panoramic virtual stereo vision approach for localizing 3D moving objects has been developed in the Department of Computer Science at the University of Massachusetts at Amherst. This research focuses on the cooperative behavior of robots whose cameras (residing on different mobile platforms) are aware of each other and can be composed into a virtual stereo sensor with a flexible baseline in order to detect, track and localize moving human subjects in an environment. In this model, the sensor geometry can be controlled to manage the precision of the resulting virtual sensor. This cooperative stereo vision strategy is particularly effective with a pair of mobile panoramic cameras, which can almost always see each other because each has a full 360-degree field of view (FOV). Once calibrated by "looking" at each other, they can view the environment and estimate the 3D structure of the scene by triangulation.

In the two-robot scenario for human searching and tracking, one of the robots is assigned the role of "monitor" and the other the role of "explorer". The role of the "monitor" is to monitor movements in the environment, including the motion of the "explorer". One of the reasons that we have a "monitor" is that it is advantageous for a stationary camera (mounted on the "monitor") to detect and extract moving objects from the background scene in real-time. On the other hand, the role of the "explorer" is to follow a moving object of interest and/or find a "better" viewpoint for constructing the virtual stereo geometry with the camera on the "monitor". The latter reflects the unique feature of our "virtual stereo" approach.

Mutual awareness of the two robots is necessary to dynamically calibrate the camera geometry, that is, to determine their relative orientations and the distance between them. In the current implementation, we have designed a cylindrical body for each robot with known radius and color so that it is easy for the cooperating systems to detect each other. In the future, we plan to explore ways for the robots to recognize and localize each other by their natural appearances. It is also helpful to share information between the two panoramic imaging sensors, since they have almost identical geometric and photometric properties. For example, when moving objects are detected and extracted by the stationary "monitor", it can pass information about these objects, such as a unique identifier and the geometric and photometric features of each object, to the "explorer" (which may be in motion). This makes the job of the "explorer" easier when it searches for and tracks the same set of objects.
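As a rough illustration of why a cylindrical body of known radius is a convenient calibration target, the sketch below recovers the distance and bearing to the other robot from the two edge bearings of its cylinder in a panoramic image. It relies only on the tangent-ray geometry of a cylinder (sin(width/2) = radius/distance). The function names and sample values are hypothetical, and the sketch assumes edge bearings are already available in radians; it is not the project's actual calibration code.

```python
import math


def distance_from_angular_width(radius_m: float, width_rad: float) -> float:
    """Distance from the camera to the cylinder axis.

    The two rays tangent to a cylinder of radius R at distance D subtend
    an angle w with sin(w / 2) = R / D, so D = R / sin(w / 2).
    """
    if not 0.0 < width_rad < math.pi:
        raise ValueError("angular width must lie strictly between 0 and pi")
    return radius_m / math.sin(width_rad / 2.0)


def bearing_from_edges(left_rad: float, right_rad: float) -> float:
    """Bearing to the cylinder axis as the circular midpoint of its edges.

    Averaging unit vectors avoids the wrap-around problem at 0 / 2*pi
    that a plain arithmetic mean of angles would have.
    """
    x = math.cos(left_rad) + math.cos(right_rad)
    y = math.sin(left_rad) + math.sin(right_rad)
    return math.atan2(y, x)


# Hypothetical example: a 0.18 m radius cylinder whose edges are seen
# at bearings of 350 and 10 degrees in the panoramic image.
left, right = math.radians(350.0), math.radians(10.0)
width = math.radians(20.0)
baseline = distance_from_angular_width(0.18, width)
heading = bearing_from_edges(left, right)
```

Here `baseline` plays the role of the distance between the two cameras and `heading` the direction of the other robot, which together with the symmetric measurement from the other side would fix the relative orientation.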

View planning is applied whenever object detection is hindered by occlusion or distance estimation is degraded by an adverse view configuration. Since both the robots and the targets (humans) are in motion, we define view planning as the process of dynamically adjusting the viewpoint of the exploring camera so that, together with the monitoring camera, it achieves the best view angles and baseline for estimating the distance to the target of interest. Note that the explorer continually seeks the best position as the target moves.
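To make the idea of a "best" viewpoint concrete, here is a minimal sketch that scores candidate explorer positions by a first-order estimate of the error in the monitor-to-target distance. It assumes planar geometry, independent Gaussian errors on the two bearings and on the baseline, and the law-of-sines triangulation D = B sin(phi2) / sin(phi1 + phi2). The function names, noise parameters and candidate positions are illustrative assumptions, not the error model or planner used in the project.

```python
import math


def _interior_angle(ax, ay, bx, by):
    """Unsigned angle between vectors (ax, ay) and (bx, by)."""
    return abs(math.atan2(ax * by - ay * bx, ax * bx + ay * by))


def distance_error(monitor, explorer, target, sigma_angle, sigma_baseline):
    """First-order standard deviation of the monitor-to-target distance."""
    bx, by = explorer[0] - monitor[0], explorer[1] - monitor[1]
    baseline = math.hypot(bx, by)
    # Interior triangle angles at the monitor (phi1) and the explorer (phi2).
    phi1 = _interior_angle(bx, by,
                           target[0] - monitor[0], target[1] - monitor[1])
    phi2 = _interior_angle(-bx, -by,
                           target[0] - explorer[0], target[1] - explorer[1])
    s = math.sin(phi1 + phi2)
    if baseline == 0.0 or s < 1e-6:
        return math.inf  # degenerate: cameras and target are collinear
    # Partial derivatives of D = B * sin(phi2) / sin(phi1 + phi2).
    d_phi1 = -baseline * math.sin(phi2) * math.cos(phi1 + phi2) / s ** 2
    d_phi2 = baseline * math.sin(phi1) / s ** 2
    d_base = math.sin(phi2) / s
    return math.sqrt((d_phi1 * sigma_angle) ** 2
                     + (d_phi2 * sigma_angle) ** 2
                     + (d_base * sigma_baseline) ** 2)


def best_viewpoint(monitor, target, candidates, sigma_angle, sigma_baseline):
    """Candidate explorer position with the smallest predicted error."""
    return min(candidates,
               key=lambda c: distance_error(monitor, c, target,
                                            sigma_angle, sigma_baseline))


# Hypothetical scene: monitor at the origin, target 4 m away along x.
candidates = [(2.0, 0.0), (0.0, 0.3), (0.0, 3.0)]
choice = best_viewpoint((0.0, 0.0), (4.0, 0.0), candidates, 0.01, 0.05)
```

In this toy scene the collinear candidate is rejected outright and the short-baseline candidate is penalized heavily, so the planner prefers the position that gives a wide baseline and a large angle at the target. A real planner would also have to account for occlusion and reachability, which this sketch ignores.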

This research addressed the following issues: 1) off-line panoramic camera calibration and real-time image unwarping, 2) dynamic self-calibration between the two cameras on two separate mobile robots, which forms the dynamic "virtual" stereo sensor, 3) view planning by taking advantage of the current context and goals, 4) detection and 3D localization of moving objects by two cooperative panoramic cameras, and 5) the correspondence of objects between two views, given the possibly large perspective distortion. For view planning, the distance estimation error is modeled as a function of dynamic calibration error, image match error and triangulation error. An optimal procedure helps to find the best viewpoints of the two cameras for estimating the distance of an object. Dynamic calibration is carried out by using the cooperating sensors (robots) as each other's calibration targets. A 3D match algorithm is proposed to eliminate ambiguity when matching 2D images from two widely separated cameras by checking the 3D consistency of the matches. Communication among the sensors is realized via a wireless Internet connection.
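The localization step can be pictured as intersecting two bearing rays on the ground plane. The following sketch assumes that both camera positions and both bearings are already expressed in a common world frame, which is what dynamic calibration would provide. The `triangulate` function and its in-front-of-both-cameras check are illustrative assumptions and much simpler than the 3D match algorithm mentioned above.

```python
import math
from typing import Optional, Tuple

Point = Tuple[float, float]


def triangulate(c1: Point, bearing1: float,
                c2: Point, bearing2: float) -> Optional[Point]:
    """Intersect two bearing rays; return None if no valid intersection.

    Solves c1 + t1 * d1 = c2 + t2 * d2 for the ray parameters t1, t2,
    where d1 and d2 are the unit direction vectors of the bearings.
    """
    d1x, d1y = math.cos(bearing1), math.sin(bearing1)
    d2x, d2y = math.cos(bearing2), math.sin(bearing2)
    denom = d1x * d2y - d1y * d2x  # cross product d1 x d2
    if abs(denom) < 1e-9:
        return None  # rays are (nearly) parallel
    rx, ry = c2[0] - c1[0], c2[1] - c1[1]
    t1 = (rx * d2y - ry * d2x) / denom
    t2 = (rx * d1y - ry * d1x) / denom
    if t1 <= 0.0 or t2 <= 0.0:
        return None  # intersection lies behind one of the cameras
    return (c1[0] + t1 * d1x, c1[1] + t1 * d1y)


# Hypothetical example: cameras 3 m apart observing a person at (2, 2).
cam1, cam2 = (0.0, 0.0), (3.0, 0.0)
b1 = math.atan2(2.0, 2.0)    # bearing from cam1 to the person
b2 = math.atan2(2.0, -1.0)   # bearing from cam2 to the person
position = triangulate(cam1, b1, cam2, b2)
```

A candidate match between the two views whose rays are parallel or intersect behind a camera returns `None`, which is one simple way a geometrically inconsistent pairing could be discarded.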

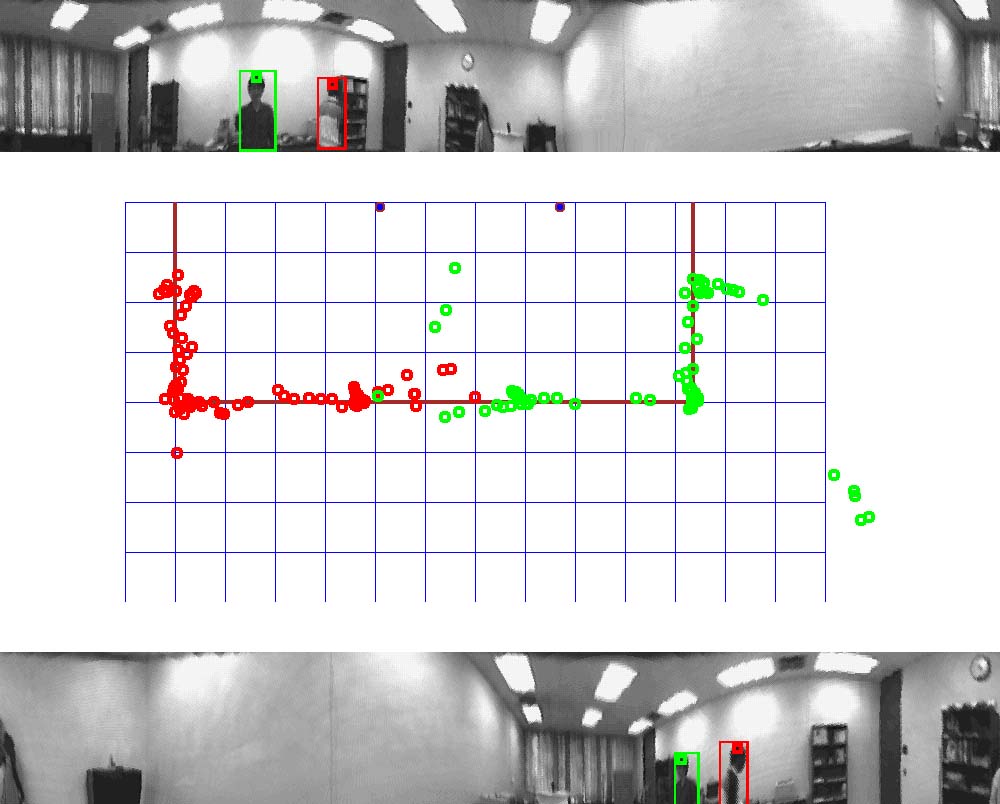

Fig. 2. Tracking results of the panoramic virtual stereo system. Top: an image from the first panoramic camera. Bottom: the corresponding image from the second panoramic camera. Center: a 2D map showing two people walking from opposite sides of a predefined rectangular path. The red dots show the trajectory of the first person and the green dots show that of the second person. For a detailed description, please see the PowerPoint presentation.

More about Panoramic Human Tracking

Edward M. Riseman, Professor

Allen R. Hanson, Professor

Roderic Grupen, Associate Professor

Deepak Karuppiah, Ph.D. student

Yong-Ge Wu, graduate student

Rome Labs (via DARPA), EDCS Self Adaptive Software (SAFER AFRL/IFTD F30602-97-2-0032) - Tactical Mobile Robots, 07/98 - 08/99

DARPA/ITO, Mobile Autonomous Robot S/W (MARS DOD DABT63-99-1-0004) - A Software Control Framework for Learning Coordinated, Multi-robot Strategies in Open Environments, 05/03/00-05/02/02

DARPA/ITO, Software for Distributed Robotics (SDR DOD DABT63-99-1-0022) - Software, Programming, and Run-Time Coordination for Distributed Robotics, 08/23/99-11/30/01